Last update: May 4, 2026 Reading time: 15 Minutes

By the time a buyer reaches your site, AI has often already pitched them on your competitor. That changes the game.

Traditional rankings still matter, but LLM SEO now decides whether models cite you when prospects research your category. So how do you stay visible? You need a sharper playbook.

At 2POINT, we treat LLM SEO as the foundation modern content sits on, not a side project. This guide shows you how to rank in AI Overviews, the signals that earn citations, and the LLM SEO strategy your brand needs to win in 2026.

LLM SEO is how you structure, format, and position your content so large language models cite and accurately represent your brand inside AI-generated answers. In other words, the goal has shifted.

You are no longer chasing a top-ten ranking. Instead, you are becoming the source the model pulls from when it replies to your buyer.

So how does this differ from keyword SEO?

Traditional SEO still works, but LLM optimization adds new layers, including entity signals, training-set visibility, retrieval-friendly formatting, and third-party trust signals. Answer engine optimization (AEO) overlaps here, too, especially for the conversational queries now feeding AI surfaces.

That said, both layers reinforce each other. The same fundamentals powering your AI search visibility also keep your traditional rankings strong.

Search no longer follows one path. Your buyers now blend Google queries, AI-generated answers, and direct prompts inside ChatGPT or Gemini across the same purchase journey.

Many of those sessions also end without a single click.

The data backs this up clearly. A Pew Research Center survey of 5,123 U.S. adults found that 34% have used ChatGPT, climbing to 58% among adults under 30. In other words, your buyers are already living inside AI conversations long before your remarketing tag ever sees them.

On top of that, Semrush analyzed 10 million keywords and found that AI Overviews appeared in roughly 16% of queries, and that those queries had higher zero-click rates than standard SERPs.

If your top-of-funnel pages are slipping without a clear ranking drop, that is the zero-click shift hitting your reports. Fixing it starts with understanding the six signals models use to decide who gets cited.

When a model does not know who you are, it recommends someone else. That cost lands in three places.

In short, the B2B buyer journey now runs through AI surfaces, and invisibility there compounds quietly.

You don’t need a tool to diagnose this.

Just run five brand-relevant prompts inside ChatGPT, Gemini, Perplexity, and Google AI Overviews. Use queries your sales team hears weekly, like “best [category] for [use case]” or “alternatives to [competitor].”

If your name does not appear and a competitor’s does, you have an LLM SEO gap. From there, track each gap in a simple sheet and group them by query intent.

That list becomes input for your LLM SEO strategy. Skip this, and you risk creating content no one is asking for.

So how do you close that gap? It starts with understanding the mechanics. LLMs don’t crawl in real time the way a search engine does. Instead, they retrieve, score, and synthesize.

That shifts the signals that matter when you optimize content for LLMs. Six factors decide how LLMs rank content today.

First up: models do not see your brand as a string of text. Instead, they see you as an entity, the same way they recognize a person or a place.

So when your brand has a clean knowledge graph footprint, with consistent name, description, and category data across Wikipedia, Wikidata, LinkedIn, Crunchbase, and your own schema, models surface you more reliably.

On the flip side, weak or inconsistent entity data leaves the model guessing. And guessing models cite someone else.

Next up, your domain authority still matters, but the bigger lift comes from the trust ecosystem around it. Citations from established publications, mentions in expert roundups, and links from .edu and .gov sources all signal authority that an LLM is more likely to inherit.

In fact, the E-E-A-T principles Google rewards line up almost perfectly with what LLMs look for in content for AI citations.

Then there’s format. LLMs lift answers cleanly when your page is already shaped like one. So include a TL;DR block under your H1, a definition sentence in the opening paragraph, FAQ blocks at the end, and ordered lists for any process.

Otherwise, pages that read like long unbroken essays force the model to summarize on the fly, which is where weak LLM optimization quietly hands the win to a competitor.

Freshness comes next. For time-sensitive topics, LLMs prefer sources dated 2025 and 2026.

So add a “Last Updated” stamp to your pillar pages, refresh stats every six months, and date your data clearly.
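If you want to keep that six-month refresh cycle honest, a small script can flag pages that have drifted past it. The sketch below is only an illustration: the page paths, the `stale_pages` helper, and the 182-day window (our stand-in for "six months") are all assumptions, and in practice the dates would come from your CMS or sitemap.

```python
from datetime import date, timedelta

# Assumption: "six months" is approximated as 182 days.
REFRESH_WINDOW = timedelta(days=182)


def stale_pages(last_updated: dict[str, date], today: date) -> list[str]:
    """Return the pages whose "Last Updated" date is older than the window."""
    return sorted(
        path
        for path, updated in last_updated.items()
        if today - updated > REFRESH_WINDOW
    )


# Hypothetical pillar pages and their "Last Updated" stamps.
PAGES = {
    "/llm-seo-guide": date(2026, 5, 4),
    "/local-seo-guide": date(2025, 9, 1),
}

print(stale_pages(PAGES, today=date(2026, 5, 4)))  # ['/local-seo-guide']
```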

Beyond that, specific numbers, methodologies, and named studies travel more effectively through retrieval pipelines than vague claims. That is why generic “experts say” filler keeps losing ground in AI content optimization.

Now for depth. LLMs reward sites that cover a topic exhaustively, and the pillar-and-cluster model that already serves your SEO does double duty here.

Specifically, a pillar page anchored by 8 to 12 supporting articles covering subtopics, FAQs, and adjacent angles signals real expertise. From there, a content audit helps you map the gaps, merge thin pages, and decide what new content for AI citations to commission next.

Finally, machine accessibility comes down to whether crawlers can reach you.

So make sure GPTBot, ClaudeBot, PerplexityBot, and Google-Extended are not blocked in your robots.txt. Block them, and you remove yourself from the citation pool entirely.

From there, an llms.txt file at your root offers a clean map of your most quote-worthy content, though Ahrefs notes adoption across major LLMs is still uneven. So pair it with strong schema markup (Article, FAQPage, Organization) to cover the basics that anchor any large-language-model SEO strategy at the technical layer.
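A quick way to check the crawler-access point above is to run your robots.txt through a parser and see which AI user agents it turns away. Here is a minimal sketch using Python's standard `urllib.robotparser`. The sample rules and the example.com URL are made up for illustration, and the parser's matching may differ in edge cases from how each crawler actually interprets robots.txt.

```python
from urllib.robotparser import RobotFileParser

# The four crawler user agents named in this guide.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the AI crawlers that robots_txt does not allow to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Hypothetical robots.txt: GPTBot is blocked everywhere, everyone else
# is only kept out of the login area.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /login/
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/blog/llm-seo"))
# ['GPTBot']
```

To test a live site, you would pass in the text of your own robots.txt and a representative editorial URL.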

Now you know the six signals. The playbook below is what our SEO team follows to take brands from invisible to consistently cited across AI surfaces. Run the steps in order, because skipping one breaks the rest.

Start with a baseline before any production work.

Pick 20 brand-relevant queries spread across decision-stage, comparison, and informational prompts that your buyers actually run. Then test each one inside ChatGPT, Gemini, Perplexity, and Google AI Overviews, and record whether you got cited, missed, or lost the spot to a competitor.

That single sheet tells you where your LLM SEO gaps lie and which queries deserve the most effort. It also gives leadership a baseline to compare against in 90 days, which is roughly when consistent work to optimize content for LLMs starts showing up in citation share.
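The baseline sheet described above is simple enough to tally in a few lines of code once the manual testing is done. The sketch below assumes each row is a `(query, platform, outcome)` tuple with the three outcomes from this step. The outcome labels, sample queries, and function names are ours, not a standard.

```python
from collections import Counter

# Assumed labels for the three outcomes recorded in the audit.
OUTCOMES = ("cited", "missed", "lost_to_competitor")


def citation_share(rows):
    """Share of tested queries, per platform, where the brand was cited."""
    totals, cited = Counter(), Counter()
    for _query, platform, outcome in rows:
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome!r}")
        totals[platform] += 1
        if outcome == "cited":
            cited[platform] += 1
    return {platform: cited[platform] / totals[platform] for platform in totals}


def gaps(rows):
    """The (query, platform) pairs where the brand was not cited."""
    return [(q, p) for q, p, outcome in rows if outcome != "cited"]


# Hypothetical audit rows; a real sheet would hold 20 queries x 4 platforms.
AUDIT = [
    ("best crm for startups", "ChatGPT", "cited"),
    ("best crm for startups", "Gemini", "missed"),
    ("alternatives to acme", "ChatGPT", "lost_to_competitor"),
    ("alternatives to acme", "Gemini", "cited"),
]

print(citation_share(AUDIT))  # {'ChatGPT': 0.5, 'Gemini': 0.5}
print(gaps(AUDIT))
```

Re-running the same tally after 90 days gives you a like-for-like comparison against the baseline.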

Once you have your gap sheet, expand your seed terms into the conversational queries buyers actually type into AI tools. Use Semrush, Ahrefs, and ChatGPT in tandem here. The “fan-out” pattern matters: one head topic spawns dozens of long-tail prompts that hide your real opportunities for content for AI citations.

From there, map every prompt to a pillar or cluster page. Topics with the highest commercial value get pillar treatment, while supporting prompts become cluster posts that internal-link back. That structure is how you rank in AI Overviews without producing fluff at the edges.

With your pillars mapped, audit your top-traffic pages with one question: could an LLM extract a clean answer from this in 30 seconds? Most pages fail that test.

The fix is to optimize content for LLMs the same way you would optimize for a featured snippet, with the answer up front and the proof underneath.

So rewrite your intros to lead with a definition sentence. Add a TL;DR block under every H1, convert your “about this guide” preamble into bullets, and add an FAQ block at the bottom of every pillar. Strip filler ruthlessly. The faster a model can find your answer, the more often it cites you.

Once your content reads cleanly for machines, the next move is letting them in. Decide which AI crawlers you want indexing your site, then write the rules clearly.

By default, we recommend allowing GPTBot, ClaudeBot, PerplexityBot, and Google-Extended on your editorial content, while blocking them from gated areas such as login pages or duplicate tag archives. From there, add an llms.txt file at your root pointing to your most citation-worthy assets.

Together, these moves close the technical gap most AI search optimization programs miss.
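For the llms.txt piece, the file itself is just structured plain text, so it is easy to generate from a list of your best pages. The sketch below follows the layout in the public llms.txt proposal as we understand it (an H1 title, a blockquote summary, then H2 sections of links). The site name, URLs, and the `build_llms_txt` helper are hypothetical, and since adoption is uneven, treat the exact format as an assumption to verify.

```python
def build_llms_txt(site_name, summary, sections):
    """Build llms.txt text from {section heading: [(title, url, note), ...]}."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical site and pages, for illustration only.
llms_txt = build_llms_txt(
    "Example Co",
    "Guides on LLM SEO and AI search visibility.",
    {
        "Guides": [
            ("LLM SEO guide", "https://example.com/llm-seo", "Pillar page"),
        ],
    },
)
print(llms_txt)
```

You would save the output as `llms.txt` at your site root, next to robots.txt.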

With access sorted, schema markup is how machines confirm what your page actually is. So use JSON-LD to mark up the following on every editorial page:

For exact syntax, Google’s structured data documentation is the source of truth. From there, validate every page with the Schema Markup Validator before publishing, and audit quarterly so a CMS update doesn’t quietly break your markup.
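To make the JSON-LD step concrete, here is a rough sketch that builds Article and FAQPage markup as Python dictionaries and wraps them in a script tag. The property names come from schema.org, but the headline, author name, and question text are placeholders, and which properties a given search feature requires is something to confirm against Google's documentation rather than this example.

```python
import json


def article_jsonld(headline, author_name, date_modified):
    """Minimal schema.org Article markup (not a complete property list)."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "dateModified": date_modified,
    }


def faq_jsonld(pairs):
    """schema.org FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize markup for embedding in a page's HTML."""
    # Escape "</" so text inside the JSON cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration.
article = article_jsonld("LLM SEO guide", "Jane Doe", "2026-05-04")
faq = faq_jsonld([
    ("Does LLM SEO replace traditional SEO?", "No. It sits on top of it."),
])
print(as_script_tag(article))
print(as_script_tag(faq))
```

Generating markup from one template like this also makes the quarterly audit easier, because every page shares the same structure.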

Now you move off your own site. Create or claim Wikipedia entries where you qualify, fill out Wikidata, keep LinkedIn and Crunchbase profiles current, and book your founders on podcasts and panels in your category.

Each appearance reinforces the entity graph models reference when deciding who to cite. So while this is the slowest part of any LLM SEO strategy, it is also the part that compounds the most.

Skip it for a quarter, and you barely notice. Skip it for a year, and a competitor with weaker content starts outranking you in citations.

Once your entity is solid, the next layer is borrowed authority. LLMs do not invent it.

Instead, they inherit it from the publications, communities, and creators they were trained on. So Forbes, Harvard Business Review, niche industry publications, Reddit and Quora threads, and respected newsletters all feed the citation pool.

In fact, McKinsey found that just 5-10% of the references AI cites come from a brand’s own site. That is why a focused digital PR push at category-defining publications outperforms generic guest posting by a wide margin.

One placement in a respected outlet usually moves your LLM optimization needle further than ten links from anonymous blogs your audience never reads.

You ran the playbook, but citations still aren’t moving. Most stalled LLM SEO programs are doing the work. They’re just doing it wrong in one of four predictable ways.

Start here: LLM SEO is a layer, not a replacement. So if you spin up a separate “AI content” workstream while ignoring foundational SEO, your program plateaus fast.

Instead, your LLM SEO strategy compounds when it sits atop strong technical SEO, content depth, and link equity. Treat them as one program with two surfaces, not two budgets fighting each other.

Even with the right setup, generic content sinks the program.

Models don’t reward content that summarizes other content. Instead, they reward original data, expert quotes, proprietary frameworks, and named methodologies.

So if a five-prompt ChatGPT session could replace your post, it probably will. Strong LLM optimization centers on the specific patterns models actually pull from in practice.

Even strong content fails if crawlers can’t reach it. Some teams block GPTBot or ClaudeBot in robots.txt out of a privacy or copyright reflex.

The result? You remove yourself from the citation set entirely.

Instead, decide deliberately which bots get access to which sections, and treat that as a strategic call, not a default no. AI content optimization can’t help a site that the model isn’t allowed to read.

Once crawlers are in, they look for who’s behind the words. Anonymous content reads as low-trust to both Google and LLMs.

So add author bylines to every post, then link each to a real bio page with credentials, publications, and external profiles. This is one of the fastest E-E-A-T wins you can ship in a single sprint, and it directly affects how LLMs rank content from your domain.

Beyond bylines, your data is the next signal. Producing content for AI citations means publishing things models can’t find anywhere else.

So original surveys, internal benchmarks, named frameworks, and dated case studies travel further than another summary of someone else’s research. If you have proprietary data sitting in a spreadsheet, that is your highest-leverage content for AI citations to ship next.

Once you’ve avoided the common mistakes, the next question is whether your work is paying off. Measuring AI search optimization is harder than SEO because the surfaces don’t share standard analytics. So the metrics that matter sit across three buckets:

Pair these with content marketing ROI tracking so attribution holds when the LLM did the heavy lifting upstream of the click.

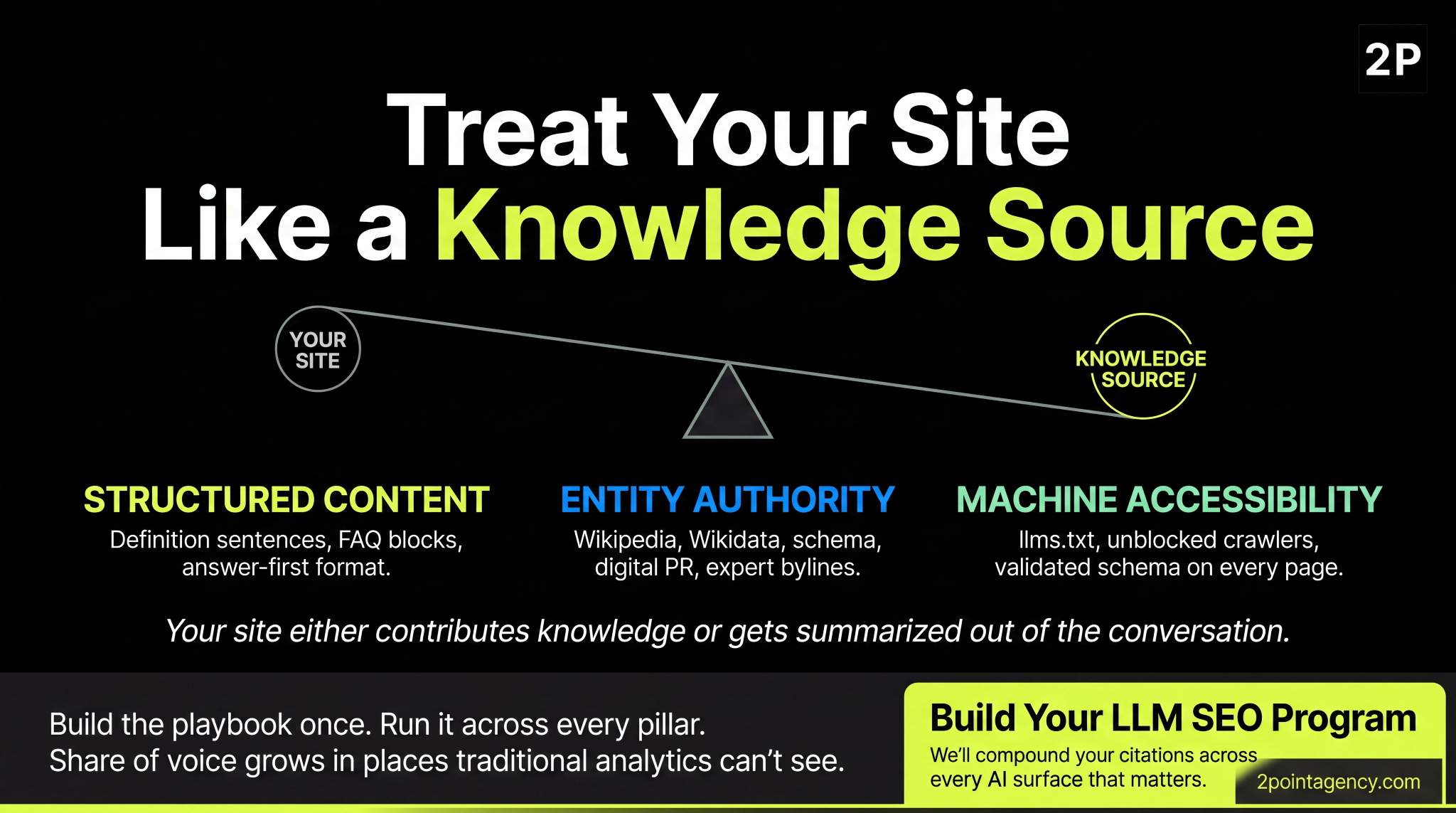

LLM SEO is the new default for content. Your site either contributes knowledge that builds trust, or it quietly gets summarized out of the conversations buyers are already having about your category.

Structured content, entity authority, and machine accessibility are what move the needle.

Build the playbook once, run it across every pillar, and your share of voice grows in places your traditional analytics can’t see. The earlier you ship the foundation, the more compounding you get back from every dollar spent on AI content optimization.

If your team is ready to make the shift, reach out to 2POINT, and we’ll help you build an LLM SEO program that compounds across every AI surface that matters.

Traditional SEO targets Google’s blue links through keywords and backlinks. LLM SEO focuses on being cited in AI responses from ChatGPT, Gemini, Perplexity, and Google AI Overviews. Both layers reinforce each other, so strong fundamentals make large language model SEO compound faster.

No, LLM SEO doesn’t replace traditional SEO. It sits on top of it. Strong technical SEO, content depth, and link authority anchor every LLM SEO strategy, so treat AI search optimization as an additional layer rather than a separate program competing for budget.

Start with ChatGPT, Gemini, Perplexity, and Google AI Overviews, since they cover most AI search traffic in 2026. Run baseline citation audits across all four, prioritize the platform with the most pipeline impact, then expand your large language model SEO work once your LLM optimization workflow is consistent.

Most brands see early citation lifts within 60 to 90 days of restructuring content, adding schema, and earning two or three category-relevant placements. Compounding authority takes 6 to 9 months. So ranking in AI Overviews is mostly a matter of depth and freshness.

An llms.txt file is a plain-text file at your site root that tells AI crawlers which content you want surfaced for AI citations. Adoption across major models is still uneven, but adding one costs nothing, so if you publish thought leadership, ship one.
